We act like algorithmic bias is some new digital-age problem, as if neutral information access were the natural state of things until Silicon Valley came along and messed it up. But humans have never had neutral access to information. Ever. Someone has always been deciding what you get to know, and they've always had reasons that had nothing to do with giving you the best possible answer.

The Library of Alexandria, that legendary beacon of ancient learning, wasn't some democratic repository where all knowledge was preserved equally. It was run by people with agendas, budgets, and blind spots. The head librarians, appointed by the Ptolemaic rulers, made choices about which texts to acquire, copy, and preserve based on what served the dynasty's political interests, what fit their intellectual frameworks, and frankly, what they personally found interesting.

Photo: Library of Alexandria, via dailydosedocumentary.com

The Original Content Moderators

When ships docked in Alexandria's harbor, officials would confiscate any scrolls they found, make copies, and return the copies while keeping the originals. Sounds like preservation, right? Except they weren't grabbing everything. They prioritized Greek texts over Egyptian ones, scientific works over popular literature, and anything that made Alexandria look like the intellectual center of the world.

The result? Entire categories of human knowledge—local histories, folk traditions, practical guides written by working people—simply vanished because they didn't make the cut. We know about Aristotle's thoughts on politics but not what ordinary Alexandrians thought about their daily lives, not because the common people weren't writing, but because their writing wasn't deemed worth the expensive papyrus and labor required to copy it.

Medieval monastery scriptoriums operated the same way. Monks spent years hand-copying texts, but they had to choose what was worth that enormous investment of time and materials. Religious texts got priority, obviously, but which religious texts? Which interpretations of scripture? Which commentaries?

The Abbey of Cluny preserved different works than the monasteries in Ireland, which preserved different works than the scriptoriums in Constantinople. Each community's theological leanings, political relationships, and local interests shaped what survived. Thomas Aquinas got copied extensively; the theological arguments of his opponents often didn't.

The Economics of Eternal Relevance

Here's what really hasn't changed: information preservation has always been expensive, and expensive processes create gatekeepers. In ancient times, it was the cost of papyrus, parchment, and scribal labor. Today, it's server farms, engineering salaries, and computational power. Different resources, same dynamic—someone has to decide what's worth the investment.

Google's search ranking, which grew out of the PageRank link-analysis algorithm, might seem objective, but it rests on human assumptions about what makes information valuable: links from authoritative sites, fresh content, user engagement metrics. These aren't natural laws; they're editorial decisions baked into code, just like the Alexandrian librarians' decision that Greek philosophical texts were more important than Egyptian agricultural manuals.
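To make "editorial decisions baked into code" concrete, here is a minimal sketch of a PageRank-style link score. It is not Google's production system: the toy web, the page names, and the damping value of 0.85 are all assumptions chosen for illustration. The point is only that a page nobody links to sinks, no matter what it says.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration sketch of a PageRank-style score.

    `links` maps each page to the list of pages it links to.
    `damping` is a tunable design choice, not a law of nature.
    """
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its rank on to the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical toy web: everyone links to the philosophy pages,
# and nobody links to the farming manual.
toy_web = {
    "greek_philosophy": ["aristotle_politics"],
    "aristotle_politics": ["greek_philosophy"],
    "royal_catalogue": ["greek_philosophy", "aristotle_politics"],
    "farming_manual": ["greek_philosophy"],
}

scores = pagerank(toy_web)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

Lower the damping value and the unlinked farming manual gets a larger baseline share. Somebody has to pick that number, and whoever picks it is making an editorial call.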

The Chinese imperial bureaucracy took this even further. New dynasties routinely commissioned the official histories of the dynasties they replaced, and those histories tended to make the current rulers look legitimate. The Qing court compiled the official history of the Ming while censoring and destroying writings it considered hostile. They weren't just controlling current information flows; they were retroactively editing the past.

The Curator's Dilemma Never Gets Solved

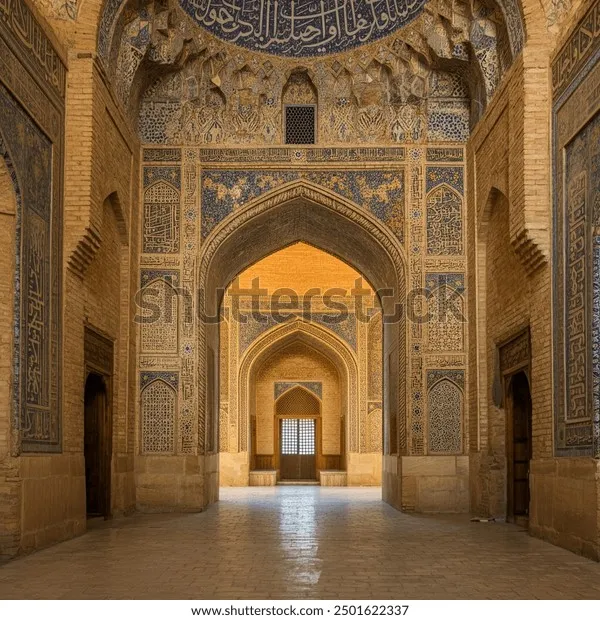

Every generation of information gatekeepers faces the same impossible choice: preserve everything (impossible) or make editorial decisions (biased). The scholars at the House of Wisdom in Baghdad tried to collect and translate works from every culture they could access—Greek, Persian, Indian, Chinese. But they still had to prioritize. Mathematics and astronomy got more attention than poetry. Medical texts were translated before historical chronicles.

Photo: House of Wisdom, via www.shutterstock.com

These weren't malicious choices. The Abbasid caliphs genuinely wanted to preserve human knowledge. But their definition of valuable knowledge reflected their interests: running an empire, advancing technology, understanding the natural world. Personal letters, folk songs, and everyday practical wisdom didn't make the cut.

Sound familiar? Facebook's algorithm prioritizes content that generates engagement. YouTube promotes videos that keep people watching. Twitter amplifies tweets that get retweeted. These aren't neutral measurements of quality; they're specific definitions of value that reflect what these platforms need to survive as businesses.
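A small sketch shows how much the definition of value matters. Everything here is hypothetical: the posts, the numbers, and both sets of weights are invented for illustration, and no real platform's formula is implied. The same three posts are ranked twice, once by engagement and once by depth.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    clicks: int
    shares: int
    minutes_read: float
    citations: int


def rank(posts, weights):
    """Order post titles by a weighted sum of whichever signals `weights` names."""
    def score(post):
        return sum(weight * getattr(post, name) for name, weight in weights.items())
    return [post.title for post in sorted(posts, key=score, reverse=True)]


# Invented sample data, not measurements from any real platform.
posts = [
    Post("Outrage thread", clicks=900, shares=400, minutes_read=0.5, citations=0),
    Post("Long-form explainer", clicks=120, shares=30, minutes_read=9.0, citations=12),
    Post("Local history archive", clicks=40, shares=5, minutes_read=6.0, citations=25),
]

# Two hypothetical definitions of "valuable".
engagement_weights = {"clicks": 1.0, "shares": 3.0}
depth_weights = {"minutes_read": 10.0, "citations": 5.0}

by_engagement = rank(posts, engagement_weights)
by_depth = rank(posts, depth_weights)

print(by_engagement)  # Outrage thread first, Local history archive last
print(by_depth)       # Local history archive first, Outrage thread last
```

Neither ordering is the neutral one. Each just reflects what the person who set the weights decided to reward.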

The Invisible Editorial Hand

The most effective information gatekeepers have always been the ones who made their editorial choices invisible. Ancient librarians didn't announce "We're not preserving working-class perspectives." They just quietly didn't copy those texts. Medieval monks didn't declare "We're suppressing theological diversity." They simply focused their limited copying resources on orthodox works.

Today's algorithms work the same way. Google doesn't tell you it's deprioritizing certain types of content; it just adjusts the ranking factors. Social media platforms don't announce they're suppressing specific viewpoints; they modify engagement algorithms. The editorial hand remains hidden, making the results feel natural and inevitable.

The human psychology driving these decisions hasn't changed either. Ancient curators, like modern engineers, genuinely believed they were making neutral, beneficial choices. They weren't consciously trying to manipulate information access; they were trying to preserve the most important knowledge. The problem is that "important" always reflects the values and interests of whoever gets to decide.

Why This Pattern Never Breaks

Every information system in history has promised to be different. The printing press would democratize knowledge. Public libraries would provide equal access. The internet would let everyone publish. Search engines would organize the world's information objectively.

But the fundamental constraint never changes: infinite information meets finite human attention. Someone always has to filter, and filters always reflect the biases of their creators. The librarians of Alexandria, the monks of medieval Europe, and the engineers of Silicon Valley all faced the same problem and reached for the same solution—editorial judgment disguised as neutral curation.

The next time you're frustrated that you can't find something online, or that search results seem skewed, remember: you're not experiencing a modern failure of technology. You're experiencing the same information gatekeeping that's shaped human knowledge for five thousand years. The only difference is that today's gatekeepers write code instead of copying scrolls by hand.